Today in "Don't Trust AI to Tell You Facts": How several different "AI" programs messed up the simple fact of to whom I dedicated a book, and what that means for how much you should trust "AI" to tell you the truth about things (spoiler: not much at all):

@scalzi

> You don’t blame an electric bread maker when some fool declares that it’s an excellent air filter

They put a bread maker next to the roll holder in toilets, in the glove compartment of new cars and in the book store across the street.

At some point you just have to snap and blame the bread maker.

RE: https://mastodon.social/@scalzi/115713494369759678

Per this by @scalzi, I got dismally wrong results from the Google AI on a search for my favorite dedication, and didn't have the heart to search further. The dedication is by Don Marquis, for his archy & mehitabel compilation. It is:

dedicated to babs

with babs knows what

and babs knows why

Please, I recently joined a group, ACEAI, which has some former Better Business Bureau folks, including myself, who will evaluate AI being used by various companies. I just joined the Advisory Board because of my years in the banking industry before I joined the BBB, where I spent 8 years. The intent is to help companies that will use AI to do so honestly, etc. Please keep us in mind. To be honest, AI is something I am NEW to. I know I hate Data Centers that steal our water & power. Thanks

@scalzi Prediction: by the 22nd century, the term "AI" will be obsolete.

It will be replaced with "PRE" (Pattern Recognition Engine) and it will be reduced in status to just one more tool in the computer science toolkit. But there will also be a cautionary footnote that its former companion technology PRACE (Pattern Recognition and Completion Engine) is now thoroughly discredited as generally not useful and often dangerous.

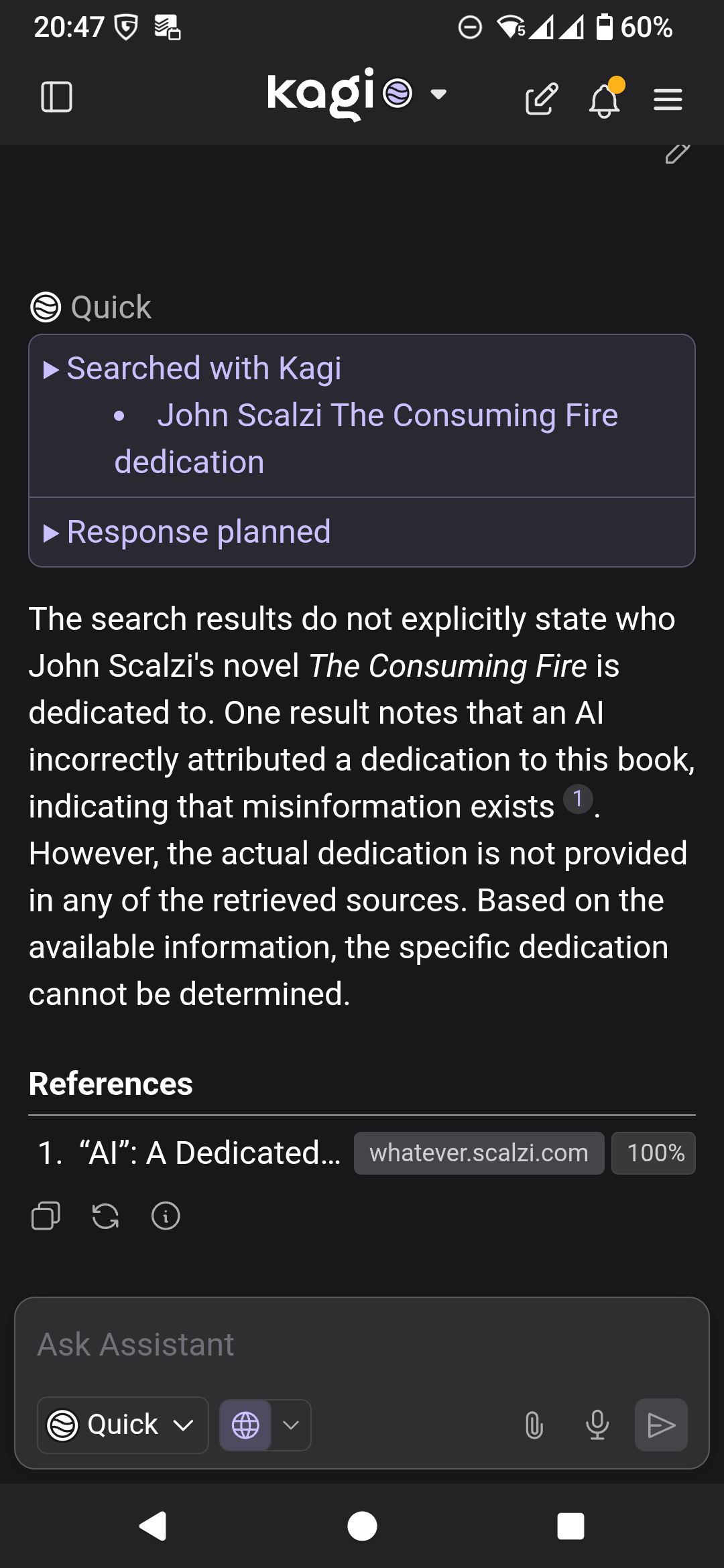

@scalzi so I thought I'd give this a go with Kagi Assistant, and hilariously, not only does it tell me it cannot work it out, but it informs me that this is a known question that AI gets wrong. Source: your own blog. Possibly because it also uses Claude.

I'm not sure I agree with its conclusion that "misinformation exists" though, rather than this being a limitation of LLMs.

@scalzi This is a perfect example of why AI should be treated as a pattern generator, not an authority. It can sound confident while being factually wrong, especially on specific, human-level details. Useful for drafting and analysis—but trusting it as a source of truth is a category error.